How to configure your system to use GPU

This page explains how to configure Ozeki AI to use your GPU, with both a quick cheat sheet and a detailed step-by-step version. Once the settings are in place, your local AI models will run on your GPU, which generally makes them respond much faster. Reading the article and reproducing the steps should take less than 3 minutes. Let's break it down!

What is a GPU?

A GPU (Graphics Processing Unit) is a piece of hardware in your computer that’s responsible for handling graphics and visual output. Originally designed for gaming and rendering images, GPUs are now also used for a much broader range of tasks, because they can process large amounts of data in parallel.

What kind of GPU should I use?

When choosing a suitable GPU, there are two main parameters to look at:

- GPU RAM (VRAM): This is the most important parameter, because it limits the size of the model you can load.

- CUDA cores: The number of CUDA cores largely determines how fast answers are generated.

For beginners, or anyone on a limited budget, the minimum GPU we recommend is the NVIDIA GeForce RTX 3090. With its 24 GB of memory, this GPU can efficiently run an LLM with 8 billion parameters.
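
To see why GPU RAM is the deciding factor, you can estimate how much memory a model's weights need. The sketch below assumes the common approximation "parameter count × bytes per parameter"; the precision labels and byte sizes are illustrative assumptions (real quantized files are usually a little larger), and the KV cache and runtime overhead need extra memory on top of the result.

```python
# Rough estimate of the GPU memory needed just to hold an LLM's weights.
# Assumption: memory ~ parameter count x bytes per parameter. The KV cache,
# activations and runtime overhead need additional room on top of this.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit floating point weights
    "q8": 1.0,    # roughly 8-bit quantization
    "q4": 0.5,    # roughly 4-bit quantization
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the approximate size of the model weights in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    vram_gb = 24  # NVIDIA GeForce RTX 3090
    for precision in ("fp16", "q8", "q4"):
        need = weight_memory_gb(8e9, precision)
        verdict = "fits" if need < vram_gb else "does not fit"
        print(f"8B model, {precision}: ~{need:.0f} GB of weights ({verdict} in {vram_gb} GB)")
```

For an 8 billion parameter model this gives about 16 GB at 16-bit precision and about 4 GB at 4-bit precision, which is why a 24 GB card leaves comfortable headroom for such a model.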

Changing AI hardware in Ozeki AI Studio (quick steps)

- Switch to Nvidia GPU processing in Ozeki AI Studio

- Restart the Ozeki Service

- Increase the number of GPU layers

- Check if the GPU is used during inference

Changing AI hardware in Ozeki AI Studio (step-by-step instructions)

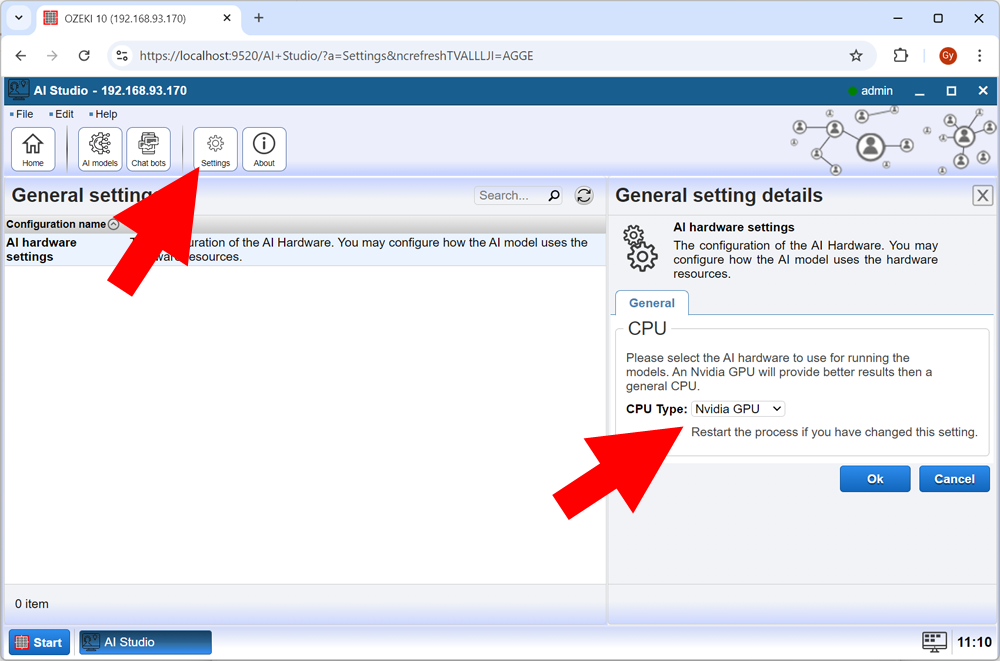

First, open AI Studio inside OZEKI 10. Click the Settings button, then, under General settings, click AI hardware settings.

A panel should appear on the right side of the screen, as shown in Figure 1. Open the dropdown list next to CPU Type, select Nvidia GPU, and click OK.

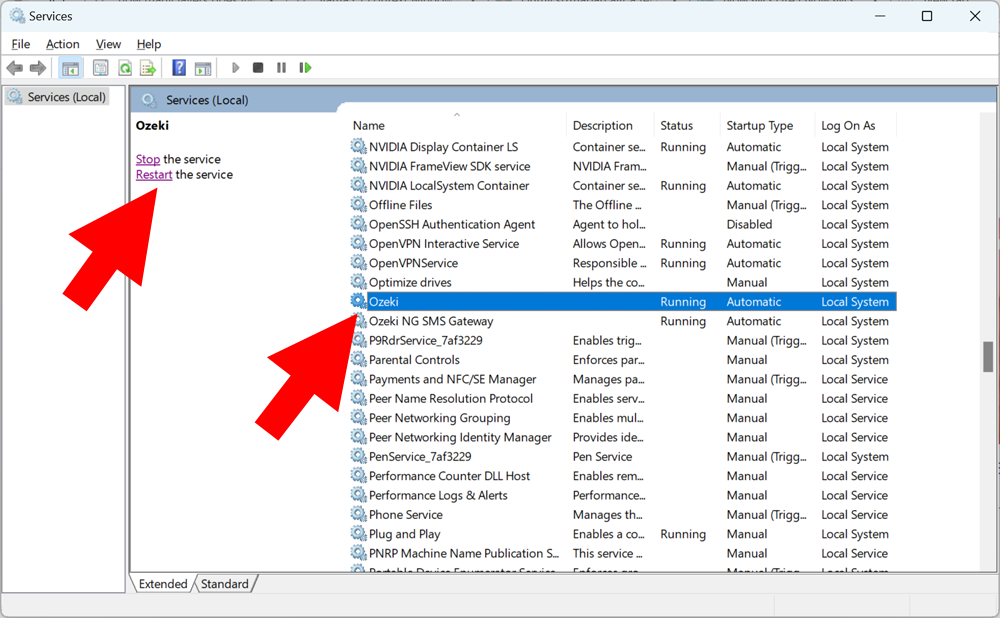

To apply this setting, you need to restart the Ozeki Service from the Windows Services console. There are several ways to open it, but for this tutorial we recommend pressing Windows+R on your keyboard, typing services.msc in the Run dialog, and pressing Enter.

Scroll down until you find the service called Ozeki. Select it, then click Restart in the pane on the left, as seen in Figure 2.
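
If you prefer to restart the service from a script instead of the Services console, the sketch below wraps the standard PowerShell cmdlet `Restart-Service`. It assumes the service's display name is exactly "Ozeki", as it appears in the Services list, and that the script runs from an elevated (administrator) prompt; the helper function names are hypothetical.

```python
import subprocess
import sys


def restart_command(display_name: str) -> list[str]:
    """Build a PowerShell command that restarts a Windows service by its display name."""
    return [
        "powershell",
        "-NoProfile",
        "-Command",
        # -ErrorAction Stop turns a failure into a non-zero exit code
        f"Restart-Service -DisplayName '{display_name}' -ErrorAction Stop",
    ]


def restart_service(display_name: str) -> None:
    """Restart the service; requires Windows and administrator rights."""
    if not sys.platform.startswith("win"):
        raise RuntimeError("Windows services can only be restarted on Windows")
    # check=True raises CalledProcessError if PowerShell reports a failure
    subprocess.run(restart_command(display_name), check=True)


if __name__ == "__main__":
    # Assumption: "Ozeki" is the display name shown in services.msc
    restart_service("Ozeki")
```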

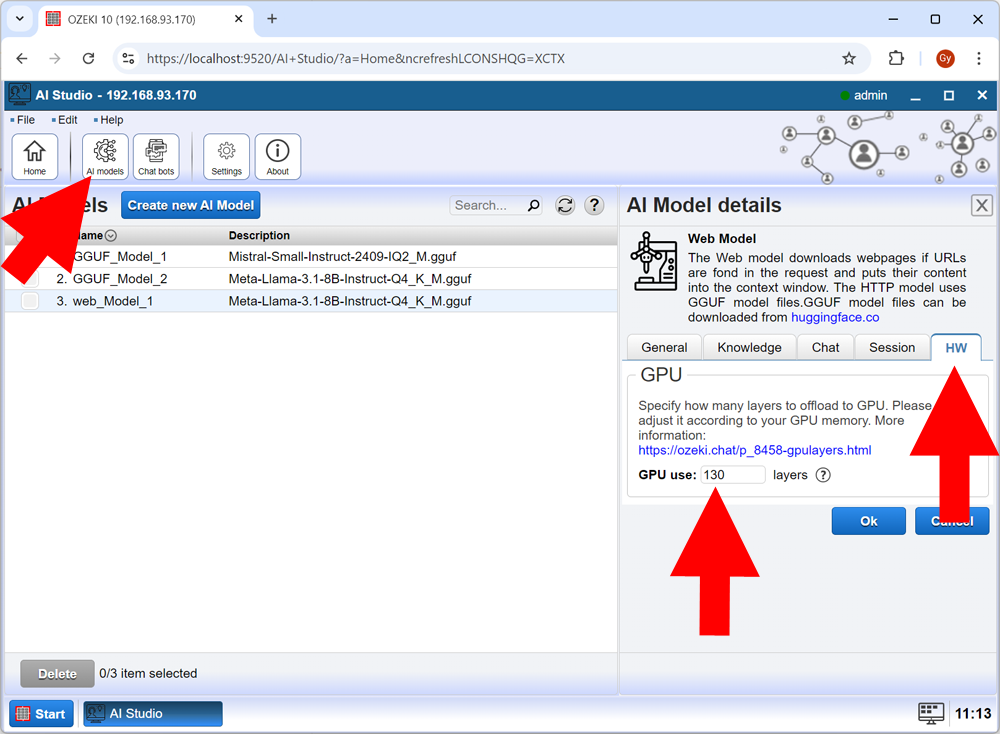

To optimize the performance of your LLM, you can offload some or all of the model's layers to your GPU. Calculate the number of GPU layers to offload that gives the best efficiency for your hardware and model.
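
As a starting point for this calculation, the sketch below uses a common rule of thumb rather than Ozeki's own formula: each layer is assumed to take an equal share of the model file, and a fixed amount of memory (the hypothetical `reserve_gb` parameter) is kept free for the KV cache and other programs using the GPU. The model size and layer count in the example are hypothetical values; read the real ones from your model's details.

```python
import math


def gpu_layers_to_offload(model_file_gb: float, num_layers: int,
                          free_vram_gb: float, reserve_gb: float = 1.5) -> int:
    """Estimate how many model layers fit in GPU memory.

    Rule of thumb (an assumption): every layer takes roughly the same share
    of the model file, and reserve_gb stays free for the KV cache, context
    buffers and other programs that use the GPU.
    """
    if num_layers <= 0 or model_file_gb <= 0:
        raise ValueError("model size and layer count must be positive")
    per_layer_gb = model_file_gb / num_layers
    usable_gb = max(free_vram_gb - reserve_gb, 0.0)
    # Never offload more layers than the model actually has
    return min(num_layers, math.floor(usable_gb / per_layer_gb))


if __name__ == "__main__":
    # Hypothetical example: an 8.5 GB model file with 32 layers
    print(gpu_layers_to_offload(8.5, 32, 12.0))  # 32: the whole model fits
    print(gpu_layers_to_offload(8.5, 32, 6.0))   # 16: only part of the model fits
```

If the result equals the model's layer count, the whole model can run on the GPU; otherwise the remaining layers stay on the CPU. Lowering the number slightly is a safe way to leave extra headroom.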

Head back to AI Studio. This time, click the AI models button.

Select your desired model.

In the right panel, open the HW (hardware) tab. Enter the number of layers you want to offload to your GPU in the textbox next to GPU use, then click OK, as shown in Figure 3.

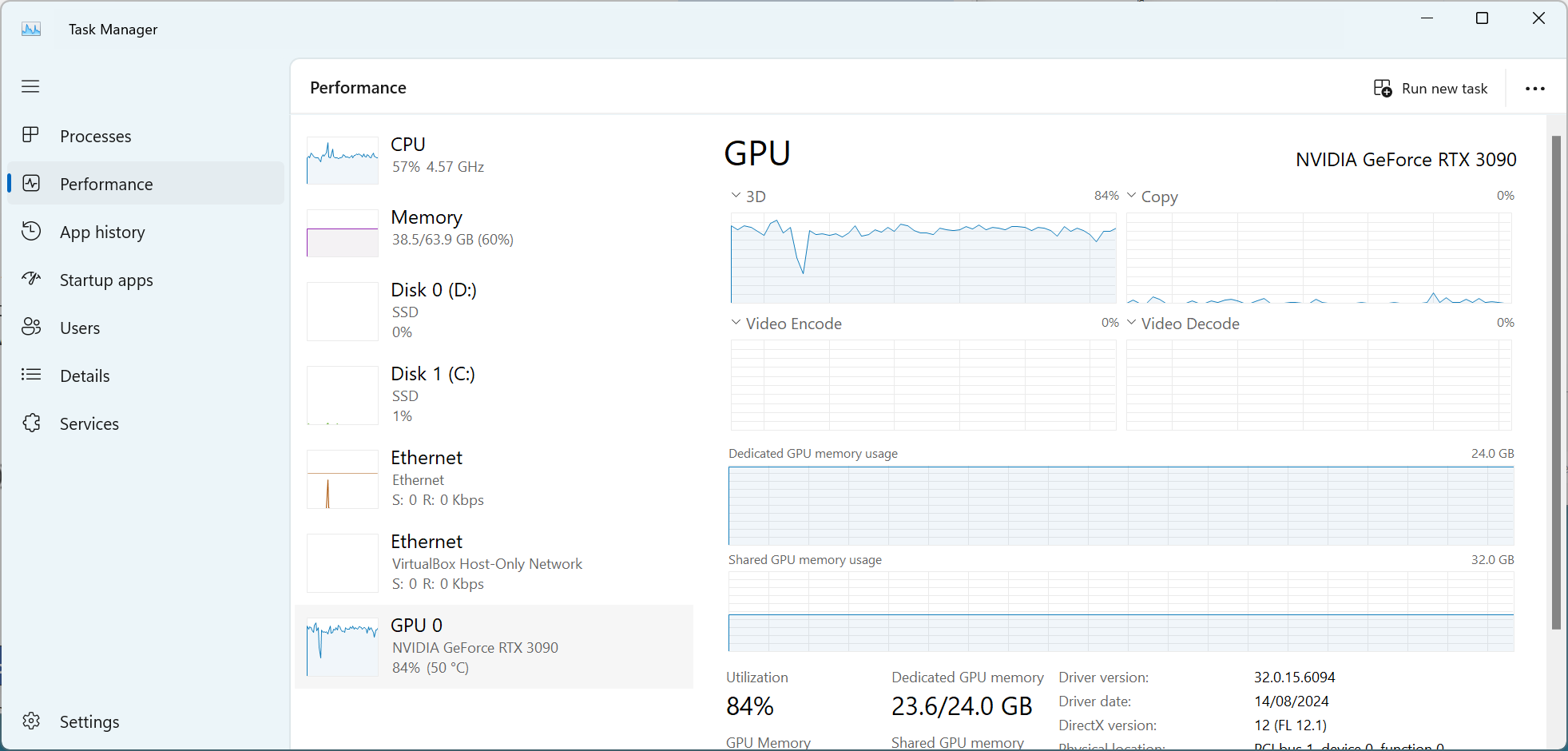

To check whether the setting took effect, open Task Manager by pressing Ctrl+Shift+Esc on your keyboard. If it worked, your GPU memory usage will be noticeably higher while the model is loaded.

Open the Performance tab and click your dedicated GPU (in this example, the NVIDIA GeForce RTX 3090).

If your graphs look similar to the ones in Figure 4, congratulations! You have successfully set your GPU as your AI hardware.
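
You can also run this check from the command line with `nvidia-smi`, which is installed together with the NVIDIA driver. The sketch below queries the GPU name, memory usage and utilization through its `--query-gpu` option and parses the CSV output; it assumes `nvidia-smi` is on your PATH, and the helper function names are hypothetical. Run it while the model is answering a prompt: memory usage should rise after the model is loaded, and utilization should climb during inference.

```python
import subprocess

QUERY = "name,memory.used,memory.total,utilization.gpu"


def parse_gpu_stats(csv_text: str) -> list[dict]:
    """Parse `nvidia-smi --format=csv,noheader,nounits` output, one GPU per line."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, used, total, util = (field.strip() for field in line.split(","))
        gpus.append({
            "name": name,
            "memory_used_mib": int(used),    # nvidia-smi reports memory in MiB
            "memory_total_mib": int(total),
            "utilization_pct": int(util),    # GPU busy percentage
        })
    return gpus


def query_gpus() -> list[dict]:
    """Ask nvidia-smi for the current state of every NVIDIA GPU."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_stats(out)


if __name__ == "__main__":
    for gpu in query_gpus():
        print(f"{gpu['name']}: {gpu['memory_used_mib']}/{gpu['memory_total_mib']} MiB, "
              f"{gpu['utilization_pct']}% utilization")
```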

What are the benefits of using the GPU over the CPU for AI tasks?

GPUs offer faster processing by handling many tasks in parallel, making them ideal for AI tasks like training large models. They’re optimized for deep learning frameworks and can handle large datasets more efficiently than CPUs, leading to better performance and scalability.

What happens if I offload too many layers to the GPU?

Offloading too many layers can exhaust GPU memory, which leads to out-of-memory errors, slower performance, or system crashes. Choose the number of layers based on your GPU’s memory capacity to keep processing stable and efficient.

More information

- Understanding AI model files

- Which hardware can run AI

- How to setup NVIDIA GPUs for AI on Windows

- How to tell if the CPU or the GPU is used for AI

- How to calculate the number of GPU layers to use